If you have seen a learning system, any system, at a trade show, such as LTUK, or ATD (coming up), or on the web, or in your dreams, you start to notice a retread. I saw it, and continue to see it. The retread isn’t only around UI/UX; it is in the pitches, spin, approaches, reasons why, etc. It gets to the point where nausea sets in. Or heartburn.

There are systems that do not follow this path, at least UI/UX-wise, but the pitch, spin, reason why, and blah blah blah are still redundant.

Generative AI is going to slide into this bucket of blah blah. It’s new. It’s exciting, and if you start to ask a lot of questions, they will be sidestepped, OR the same blah blah of why will be placed in front of your eyes and ears. It wouldn’t surprise me if the majority of vendors who go with Gen-AI (and eventually, they all must, or they will be too far behind) use GPT (by OpenAI). Maybe today it is ChatGPT (cheaper token-wise). Or GPT-4 (expensive token-wise) or the next version of GPT. With so many large language models out there, including open source ones, you would think someone would say, “Let’s go this way,” rather than everyone else’s way. I’m already seeing the sidestep I wrote about just a week ago, which vendors use to reduce cost (those tokens). No surprise there, and yes, some won’t, but how much can you eat without paying for it (in the end)?

Have you heard this one before?

- We created this system because we talked to people/people told us/we looked at the industry and saw that something needed to be done/changed. L&D needs and requirements weren’t being met. People hate their learning system (okay, they use the type, often LMS).

- People want an LXP or whatever they spin here.

- People (those folks again) want self-directed learning. Bite-sized learning.

- Their system isn’t delivering what they need.

OR any of these?

- We listened/talked to researchers/experts from big-name universities that everyone oohs and ahhs about and found that their research showed that learning is best when it is blah blah.

- We found that people do not have time to learn so we did this... (whatever that is)

- We added our learning models based on research from ABCD or a Kangaroo named Joey (actually, I would be impressed with the Kangaroo)

The above are the common blah blahs. There are far more, and if you walk around a show, or listen to a vendor’s salesperson, you will notice it all becomes white noise. It reminds me of the old Peanuts cartoons when the teacher talked and you couldn’t understand what they were saying.

The Systems Themselves

If I see one more “Grid,” “Netflix-like,” “block-format or widget format that appears as blocks,” I am going to scream to myself. It becomes a blur. The next time you check out systems, take a look at how many have the same UI for the learner. It is unbelievable how bad it has become. I can’t decide if people – looking to buy a system – aren’t paying attention, are indifferent, or think that this look is far different than that look.

Skills look the same too. Everything is becoming too commonplace.

The administration side is becoming a blur too. The same UI for graphs – bar charts, pie charts, trend lines that go up and down with little dots indicating whatever. You can barely read the information – no worry, it is data visualization if your idea of data visualization is 2000 or Excel 1996. Pie charts? Bar Graphs? If anybody with Excel can replicate your design, that isn’t something you want. I mean, if you do a bit of VBA or use some formulas, you can actually surpass some of these vendors’ data designs.

Overwhelmingly the back-end (admin side) is brutal. Yowsa, I get a headache just thinking about it. Easy to use? For who – Einstein? Simplified way more than needed? Yes, because G-D forbid, a design that delivers effectively and efficiently isn’t required, so let’s go with more of a pictograph look.

I’ll be upfront here, that some vendors do not fit the above categories around the systems section. The admin side is efficient and designed accordingly, without appearing irrelevant for the admin needs, just as the learner side doesn’t look like everyone else. Rather than be applauded for it, though, they can get zinged by folks because it doesn’t look like the usual design you see elsewhere.

Those vendors who pitch “LXP” are consumed with the idea that a grid, playlist, or combo, and a content/course marketplace constitute an LXP. The reality is far from that, but no matter, because many people looking for an LXP are unaware of the specs – of how it was designed and what it entailed – and find it comforting that the system shows and does that. As for the vendors, I question whether some of them even cared to know what an LXP is (or was), and instead just went this way because “If I don’t know, who is going to ask?” A funny aspect, since many vendors do not add functionality because their clients or prospects never ask. What’s good for the goose doesn’t apply to the gander.

What should be done then, you ask?

Refresh your UI and UX

Yes it is daunting. Yes, it will cost you money, but you are in the business to make money – at least you should be. But it has to be done.

- If your Learner UI/UX has not been refreshed in the last three years, it must be. SaaS offers advantages; the last thing you want is the disadvantage of a dated look and appearance. There are vendors that do touch-ups on the learner side. Touch up the catalog. Touch up this screen or that screen, but never a full revamp. I often hear that their clients aren’t asking for it (that again), or that by going piece by piece it won’t shock people or impact them too much.

Will some people freak out over a total revamp? Absolutely. But you can soften the blow if you notify them ahead of the rollout and schedule training sessions for the admins and those in L&D, Training, or wherever the system sits (a lot of customer training is landing in Marketing – I wasn’t aware any of those folks have an L&D or Training background). And overwhelmingly, people like new looks and appearances.

If you doubt it, take a look at anyone who has a new car or upgrades their flat or house or yard or makes some other design decision. If you change the paint of your residence or add some new flair, it’s because you want a new look. If you purchased a home from the ’80s or even the ’90s, I surmise you did some updating. It’s funny how many homes from that timeframe look so dated, even homes built in the early 2000s. I have even seen people change up their Halloween decorations or Christmas lights. People, in this instance, like change – something new and refreshing. So, why are learning system vendors avoiding it when many of those employees behind the scenes are doing the same thing many of us are doing this week or next month, or in the coming year with our place, decorations, or even our car?

The majority of your user base will like the new refresh; heck, some will love it. You can’t please everyone. On the admin side, ditto. Restructure it, reformat, redesign, and create an effective yet efficient look pleasing to the eyes. Avoid the following pitfalls when you are changing your design for the learner/admin side:

- Talking only to the power users – i.e., clients. They are going to like whatever you do, and many will want pie in the sky, which you know won’t happen anyway, or if it does, you will go down the rabbit hole. They love your system to begin with. Rarely do vendors talk to those clients who are not using the system as often as expected.

- The Focus Group. There is data out there stating that focus groups are ineffective. Plus, who are these people anyway? Oh wait, typically the most prominent clients you have (by user base or by name), and if they are lucky, some smaller-size clients. Usually, just like the power users, they skew toward the very large enterprise.

- Ignoring your expertise. When you reach out to your clients for your new refresh, this tells me you lack confidence in your design ideas. I cannot recall a car designer ever reaching out to folks who own that car, asking them what the design should look like or what features they want or need. For those who need to do the latter, around the functionality, you can wait – because you do it anyway when it comes to your roadmap – plus, I thought you were the experts here. Or are the clients, usually the very large or mid-sized enterprises, the experts? I get that this is uncharted territory, but if you are afraid to take the risk, what are you doing selling a solution and implying that the people you have hired are experts, just not when it comes to a refreshed design?

Who has a design that is different from everyone else?

There are quite a few out there, but the ones that stand out by far are

- Biz Skills

- Cognota (Albeit they are a learning operations system, so it should be different)

- Odilo (but that is mainly the main screen, after that, it gets brutal)

- Fuse (some refreshing is needed)

- NovoEd

- Cornerstone (a new look on the learner side and some on the admin side)

- Eurekos (and more is coming)

- Learn Amp

- Degreed (but some refreshing is needed)

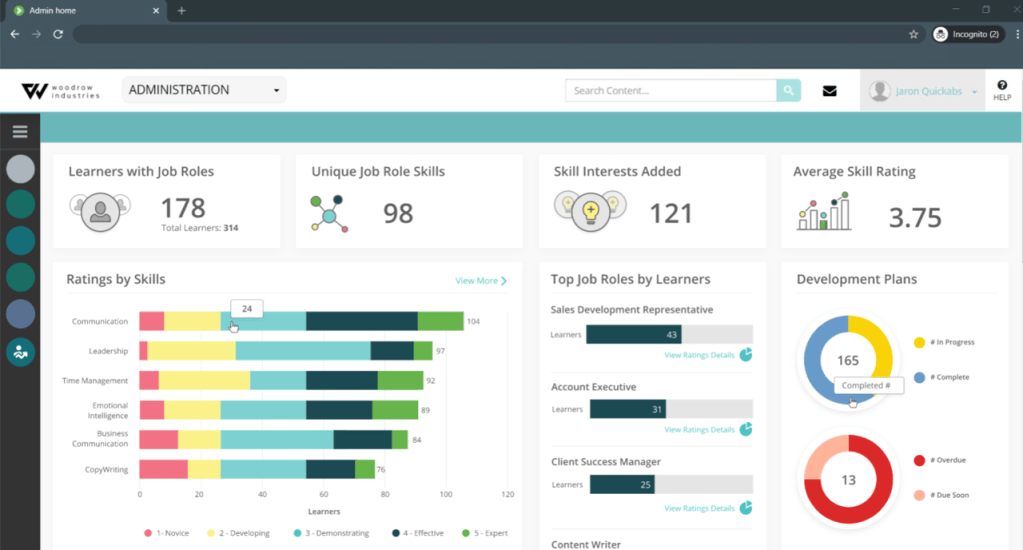

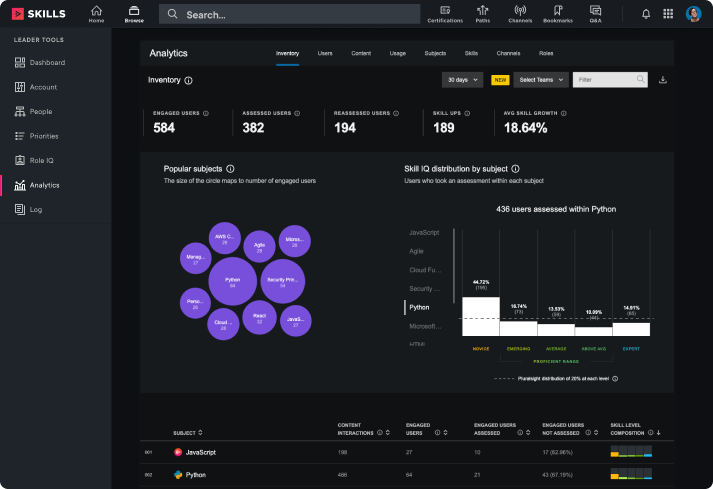

This is just a partial list and by no means extensive. From the metrics standpoint, though, the best one I have seen so far is Pluralsight. It looks sharp, it tells me the story around the learning/training of my employees, and it is fresh (although it also needs an update, just a slight bit). CrossKnowledge has a nice and slick metrics look, too, and ditto with Fuse. With Biz Skills (that vendor again, Biz Library), the metrics tell me part of the story, but the content-to-job-role-to-skills mapping ahead of time really sets it apart from everyone else. And it still baffles me why more vendors are not doing this – especially with their content marketplace providers.

Two Examples of Fresh Look around Metrics

Biz Skills

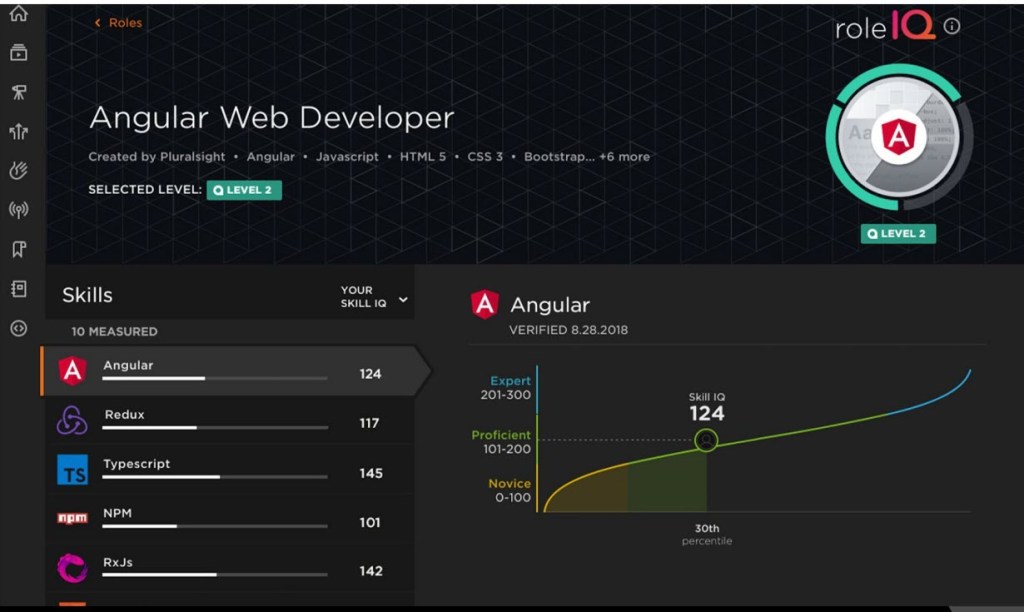

Pluralsight – Around Skills (it is actually on the learner side, but still, the UI is impressive)

Granted, there is some data they need to add, but overall, still clear, concise, and visual, and the Skill IQ design clearly shows where the end-user is with their Skill IQ compared to being an “Expert.” There isn’t confusion there.

Both show off a stunning look. The dark design on Pluralsight is not common in the space, although some vendors will offer it by early next year (giving the admin the option). Biz Skills is just appealing. Pluralsight is very expensive. Biz Skills is affordable. Yet, each went with a different appearance, UI and UX.

Here is Pluralsight’s Metrics Design – Notice how it tells you, the admin, the person overseeing L&D or Training a story around your end-user’s learning?

Generative AI and The Admin Side

To me, the holy grail for Generative-AI and The Admin side goes like this:

a. The person overseeing L&D, Training or whatever department they come from enters in their use case(s). They can do one or many – but it has to be very detailed and to the point (essential for Gen-AI)

b. The learning system then identifies the administration functionality they will need to meet each use case and the metrics that align directly with it. If the admin or whoever wants to update or go deeper, they describe what else they want to achieve, and the system identifies the functionality for it. The system can even offer recommendations around metrics or other functionality on the admin side that achieves the goals but from a different perspective. For me, this latter part would be around the metrics – specifically.

Why does it matter?

Most admins will never use all the functionality on your admin side, even if it benefits them. The reason is pretty simple – they aren’t aware of it. Thus, they call you (the vendor) and ask, “How do I do this or that?” (and if it is in the system, you tell them). Others never call and privately grumble to themselves.

I know that overwhelmingly the vendors will eye Gen-AI around the learners. I get it, and they should, of course. But the admin side, especially with those use case(s), can have an impact in a way that has never been done before. When you consider that more and more folks want full automation or pretty darn close to it (always include a human here), the Holy Grail makes complete sense.

The Coaches

This is already in play with some systems and will continue. While I prefer the mentoring angle (which is different than coaching), especially with cohort-based learning – which if your UI/UX doesn’t show it off, then seriously, you are in the wrong business – the coaching side is just blah and yuck, and it tells me what, exactly?

What I am about to lay out applies to mentors, too.

- Too many systems focus only on selecting the coach/mentor. Sure, you can see their skills, maybe job role, and their profile too.

- After that, it is everywhere and nowhere

I have yet to see one that tells me the real story behind the success or lack thereof of these people.

Okay, they are an expert, but so what? Are they effective? Do the people who choose them feel as though they are really helping them, whether it is mentoring (mentors) or coaching around a specific skill or series of skills (coaching)? Can the learner rate them? Is the rating scale clear, and does it define what each rating means for the learner? Can others see the ratings under the coach/mentor profile or somewhere in the system?

On the Admin side – with those metrics

Where is the info about the coach/mentor? If I am running L&D, Training, or whatever department – whether it is for customer training, association members, students, employees – office or blue-collar, I would want to know the following – because it is the difference between legit success or failure.

- The most popular questions learners ask coaches/mentors.

- The most popular topics that coaches/mentors cover or provide to the learners.

- Ratings of each coach/mentor – around response time (pretty crucial), feedback or assistance (which can be tracked from the learner rating – if they can pick a few), the rating from each learner who selected that coach/mentor plus an overall rating, and comparison data between coaches/mentors tied to the topic(s), with response time too.

- Other data that tells you the story – the real one around each coach/mentor

- Down the road, if people hire a coach/mentor, then a financial number of cost versus rating and success or failure. Pretty relevant here.
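For the vendors who want to see how little it takes, here is a minimal sketch in Python of the kind of roll-up I am talking about. Everything in it is an assumption for illustration: the `Session` record, its field names, the 1-to-5 rating scale, and the sample coaches are all hypothetical, not any vendor's actual data model.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from statistics import mean, median


@dataclass
class Session:
    """One learner interaction with a coach/mentor (hypothetical record)."""
    coach: str
    learner: str
    topic: str
    rating: int            # learner rating, assumed 1 (poor) to 5 (great)
    response_hours: float  # how long the coach took to respond


def coach_report(sessions):
    """Summarize learners, ratings, response time, and topics per coach."""
    by_coach = defaultdict(list)
    for s in sessions:
        by_coach[s.coach].append(s)

    report = {}
    for coach, rows in by_coach.items():
        topics = Counter(r.topic for r in rows)
        report[coach] = {
            "learners": len({r.learner for r in rows}),
            "avg_rating": round(mean(r.rating for r in rows), 2),
            "median_response_hours": median(r.response_hours for r in rows),
            "top_topics": [t for t, _ in topics.most_common(3)],
        }
    return report


def rank_by_topic(sessions, topic):
    """Compare coaches on one topic: best average rating first, then fastest response."""
    stats = coach_report([s for s in sessions if s.topic == topic])
    return sorted(
        stats,
        key=lambda c: (-stats[c]["avg_rating"], stats[c]["median_response_hours"]),
    )


# Made-up sample data, purely for illustration.
sessions = [
    Session("Ana", "lee", "negotiation", 5, 2.0),
    Session("Ana", "kim", "negotiation", 4, 6.0),
    Session("Ana", "lee", "feedback", 5, 4.0),
    Session("Raj", "sam", "negotiation", 3, 30.0),
    Session("Raj", "kim", "negotiation", 4, 20.0),
]

report = coach_report(sessions)
ranking = rank_by_topic(sessions, "negotiation")
```

With data like this, `report["Raj"]` shows a slow median response time next to a middling rating, and `ranking` puts the coaches side by side on a single topic. That is the start of a story, not a pie chart.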

Why those metrics?

Learning systems were created because ILT (instructor-led training, a prominent form of learning/training) did not tell those overseeing L&D and Training what their people knew or didn’t know. What they really were interested in or not. In other words, metrics. They used to go really deep, including what pages someone was going into, how often – relevant data. Not just that they entered the course or piece of content. Those Likert Scale evaluations were worthless. The comments angle was brutal – “I hated the food,” “I didn’t like the person’s tie,” “Why wasn’t there more food,” “It was too hot or cold in there.” Relevant? No. Insightful? No.

When I think of coaches/mentors, I often think of speakers who present at shows. Each of them is identified as an expert in whatever. And yet, if you poll people afterward or listen to feedback as they are walking around, inevitably you will hear that the person didn’t know the topic, that they were boring, that the information relayed didn’t help the attendee, it was a waste of time, and so forth.

But why, pray tell, when you have coaches/mentors in your system, is that kind of information not in play? An expert means they are an expert. Nothing more. It doesn’t say how effective they are, how well they present the information to the end-user, how well they follow up or whether or not they have empathy (something that should be tracked, by the way, using data points), and so on.

Nobody seems to care, yet you should. Your learners are selecting these people, and thus, the expectation bar is set high. This goes back to a point I keep pressing: your metrics must tell you the story of your learning/training.

Oh, and ignore the Quora angle here, which some vendors go with for coaching. Coaching or Mentoring is meant to be one on one. Not one on everyone in the system.

Bottom Line

There are going to be people who say, “Well, he is saying refresh, but his blog isn’t updated.” And you are right, but it is in the works (already being developed). My RFP template is getting a major overhaul and a new design approach, too (also in the works).

Do I need to do this? No. Not really. Nobody is asking, “Can you do this?” or “Why haven’t you done it?”

Thus I could sit by and do nothing. Be happy with what it is and where it is at.

But, then, why would I want to?

Refresh is needed. Retread is not.

And BTW, the self-directed, bite-sized learning piece so many vendors pitch as though this is what folks want – WBT back in the late 90s was created specifically for that – self-paced was the term, bite-sized was called micro-learning, and metrics told a story (for most systems).

It was new. It was different.

And it was risky.

Yet it worked.

As your new refresh, new approach and perspective will.

And if you are leery about doing it, then tell you what

Move aside.

E-Learning 24/7